When an AI model generates information that sounds plausible but is factually incorrect, fabricated, or not supported by its source data.

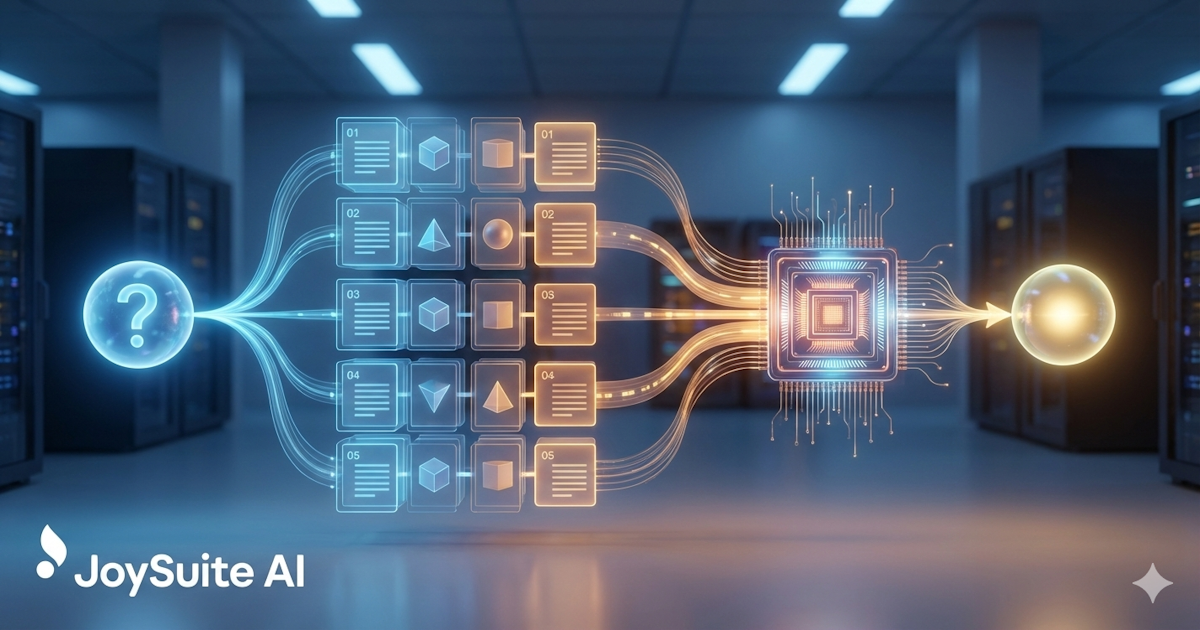

AI hallucinations occur because language models generate text by predicting likely continuations from statistical patterns learned during training, not by checking claims against verified facts. A model may confidently present invented statistics, cite nonexistent sources, or combine real facts into false conclusions. In enterprise contexts, hallucinations are a serious concern because employees may act on incorrect information. Organizations reduce this risk by using retrieval-augmented generation (RAG) to ground AI responses in verified company documents, requiring responses to cite their sources, and establishing human review workflows for high-stakes decisions.
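As an illustration of how a citation requirement might be enforced, the sketch below flags answer sentences that either carry no citation or cite a document that was not among those retrieved for the query. It assumes a hypothetical citation format, `[doc:<id>]` placed before each sentence's closing punctuation; the function name `unsupported_sentences` and the document IDs are also hypothetical. It is a cheap filter only: it does not verify that a cited document actually supports the claim.

```python
import re

# Hypothetical citation format (an assumption, not a standard): "[doc:<id>]",
# placed before the sentence's closing punctuation.
CITATION = re.compile(r"\[doc:([\w-]+)\]")


def unsupported_sentences(answer: str, retrieved_ids: set[str]) -> list[str]:
    """Return sentences that lack a citation or cite a document that was not retrieved."""
    flagged = []
    # Naive split on whitespace that follows ., !, or ?; adequate for a sketch.
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        if not sentence:
            continue
        cited = set(CITATION.findall(sentence))
        # Flag if nothing is cited, or if any cited ID is outside the retrieved set.
        if not cited or not cited <= retrieved_ids:
            flagged.append(sentence)
    return flagged


answer = (
    "Refunds are processed within 14 days [doc:refund-policy]. "
    "The CEO approved a 20% bonus for all staff. "
    "Travel must be booked via the portal [doc:travel-2019]."
)
print(unsupported_sentences(answer, {"refund-policy", "travel-2024"}))
# ['The CEO approved a 20% bonus for all staff.', 'Travel must be booked via the portal [doc:travel-2019].']
```

In a workflow like the one described above, flagged sentences would be routed to human review rather than shown to employees as fact.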